In an earlier article in this series, we split “agentic AI in production” into four chunks: workflows, orchestration, guardrails, and observability. This one is about orchestration – the control layer you only notice when it’s missing.

Because once an agent is allowed to touch real systems, the boring stuff starts deciding outcomes: a timeout here, a retry there, two triggers for the same intent, a human who doesn’t approve until tomorrow. Automation can kick off a step; orchestration is what keeps the whole run stitched together across systems, waits, and failure modes.

We’re going to stay focused on the mechanics that make runs survivable: how you route work, how you retry without duplicating side effects, where approvals belong, and what you capture in the audit trail so you can later answer “who changed what, and why” without guessing.

Agentic AI Orchestration: Why a Control Layer Exists

Once orchestration is in place, the control layer becomes essential for keeping systems out of trouble.

The moment an agent can write to systems of record, you’re running a long-lived process. It might pause for approval. It might hit a rate limit. A tool call might time out. The same request might get triggered twice. If you don’t persist state and handle those cases deliberately, you’ll get duplicates, partial updates, and runs you can’t confidently explain later.

Workflow engines have been dealing with this class of problems for years under the name durable execution: save progress so a run can pause, resume, and recover; retry with backoff when failures are transient; wait for humans without “keeping the whole thing in someone’s head.”

What “agents in production” break without control

Orchestration vs Automation (and Why the Difference Matters)

Once you start treating agent runs as long-lived processes, the terminology tends to get sloppy in the same way the implementation does. “Automation” and “orchestration” get used interchangeably, right up until something fails and you realize you’ve been talking about two different problems.

Automation is usually a single repeatable action. Orchestration is what coordinates multiple actions across systems (and sometimes people) into one end-to-end run without losing track of where you are or what already happened.

Why this matters for agentic systems

Agents don’t run one step; they run a chain across systems, waits, and retries. If you treat that chain as “just automation,” you lose the basics: where the run is, what already happened, what’s safe to retry, and how to reconstruct it later. Orchestration is the layer that coordinates the whole process end to end (including manual steps) and keeps it resumable via durable execution – state that survives crashes and can replay/resume cleanly.

The Control Layer Overview

Here’s the simplest way we’ve found to explain it without turning it into a diagram contest: the model can decide, your tools can do, and the control layer is what makes the run survive reality.

It holds the run’s “memory” (what step we’re on, what already happened, what we’re waiting for), and it’s what lets a run pause and later pick up where it left off. Temporal describes this via event history and replay: decisions/events get persisted, and a worker can rebuild workflow state by replaying that history after a crash.

The control layer usually ends up owning four things:

- Routing: given what just happened, what’s next (continue, branch, ask for missing info, escalate, stop).

- Retries: tool calls fail; you retry with backoff/caps and proper error handling (Step Functions documents Retry/Catch as first-class state machine behavior).

- Approvals: some steps are “wait for a human,” and that wait has to be durable; orchestration in the process world explicitly includes manual tasks alongside automated ones.

- Audit trail: the evidence log. NIST’s glossary definition is blunt: an audit trail is a chronological record that reconstructs the sequence of activities around an operation/event.

Where it sits is pretty literal:

Triggers (Slack/email/webhook) → Workflow definition (steps/rules) → Control layer runtime (state + routing + retries + approvals + audit events) → Workers/tool adapters → Systems of record (Jira/CRM/CMS/billing/Git)

It sits between the model and Jira, determining whether ‘this should finish cleanly’ actually happens.

Routing

Routing is where things usually go sideways. Not because the agent “can’t reason,” but because production gives you half the input, a flaky API, and a system that’s in a weird state today.

Routing should prioritize steps that remain safe under messy, real-world conditions.

Intent comes first. Before we touch anything, we should be able to say what change we’re trying to make: create a ticket, update a CRM record, draft a refund, publish something. If we can’t say that clearly, we shouldn’t write. We should ask for the missing piece or hand it to a human – anything except guessing.

From there, routing usually depends on four signals:

A production workflow must persist across delays, restarts, and external dependencies, with execution state independent of any single worker.

Fallback paths and escalation logic

Routing isn’t finished until we decide where the run goes when the happy path breaks.

Fallbacks are for cases where the run can still make progress without doing something risky.

If CRM enrichment fails, we don’t block the whole run by default. We can continue with the minimum safe payload (read-only or partial output), or park the run for later enrichment. State machines like AWS Step Functions model this directly: errors can be caught and routed to a handler instead of terminating the execution.

Escalation is for boundaries we shouldn’t cross automatically.

When required information is missing, retries exceed limits, source data conflicts, or a high-risk action is detected, execution is routed to a human-owned queue with full context. Since production orchestration routinely includes manual steps, that path must be defined upfront as part of the workflow.

When routing is done well, runs don’t fail mysteriously. They land in a small set of states we can operate: done, waiting, retrying, needs approval, needs human, blocked. That’s how we scale runs without constant manual oversight.

Retries

Retries are where “works in a demo” turns into “creates incidents.” We need them because systems fail. We need restraint because repeating a write is how we create duplicates and inconsistent state.

What to retry vs stop

Retry when it’s probably transient:

- timeouts

- connection resets / network hiccups

- 429 rate limits

- temporary 5xx responses

- dependency is degraded but not broken

Stop (or escalate) when it’s not going to fix itself:

- 4xx “you sent a bad request” (invalid schema, missing required fields)

- permission/authorization failures

- business-rule failures (record is locked, state transition not allowed)

- “we don’t know what happened” after a write attempt (unknown outcome)

That last one matters. If a write might have succeeded but we didn’t get a clean response, retrying the same write is how we double-apply side effects. In that situation, we route to verify-first (read back state / check dedupe key) before we do anything else.

Backoff, limits, idempotency, and “don’t loop forever” guardrails

A simple rule we can keep in our heads: retries are for availability, not for correctness. If correctness is in doubt (unknown write outcome, conflicting state, missing required data), we stop and verify or escalate.

Approvals

Approvals aren’t there to slow things down. They’re there because some actions are the kind you only want to do once, and only if someone responsible is happy to put their name on it.

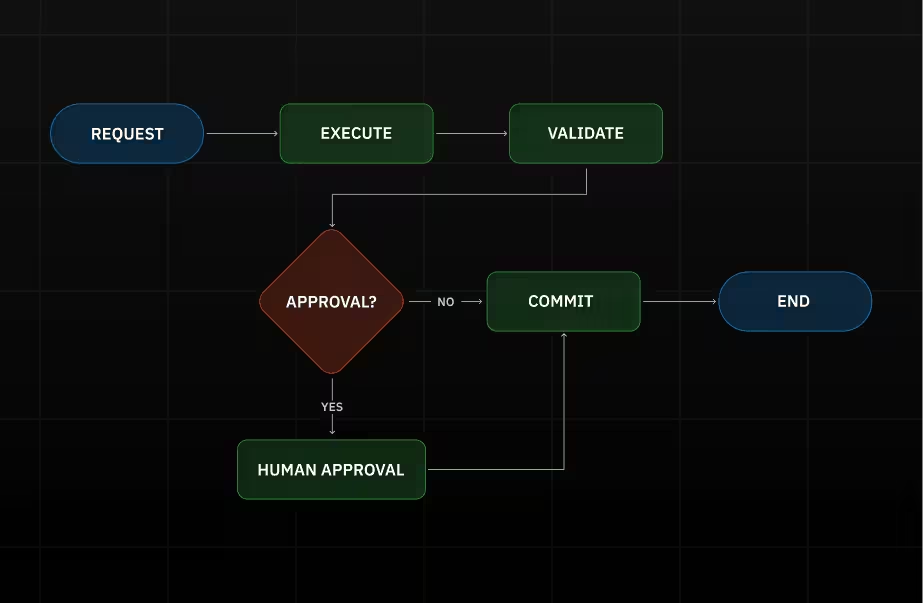

Human-in-the-loop gates for high-impact actions

If an agent is about to:

- move money,

- change access,

- delete or bulk-edit data,

- send something to a customer,

- touch production,

…we don’t want “the model felt confident.” We want a decision.

Execution needs to pause safely, persist state while idle, and resume deterministically without context loss or duplicate actions.

What we show at approval time should be dead simple:

- what will be changed (exact payload / diff)

- where it will be changed (system + record link)

- why we think this is the right move (the evidence we used)

Role-based permissions and decision checkpoints

Approvals also aren’t generic. Finance shouldn’t approve access changes. Security shouldn’t approve refunds. Content leads shouldn’t approve production writes.

So we treat approvals as scoped checkpoints:

- a specific person/role can approve a specific action,

- approval unlocks that exact write, not a wider set of capabilities,

- the decision gets recorded (who, when, what they approved), because later someone will ask. That’s what an audit trail is for: a chronological record that lets you reconstruct what happened.

When implemented correctly, approvals control high-risk actions without slowing the rest of the system.

Audit Trail

An audit trail provides a defensible record of system behavior over time. According to NIST, an audit trail is a chronological record that allows reconstruction and examination of events surrounding a security-relevant operation, from initiation through outcome.

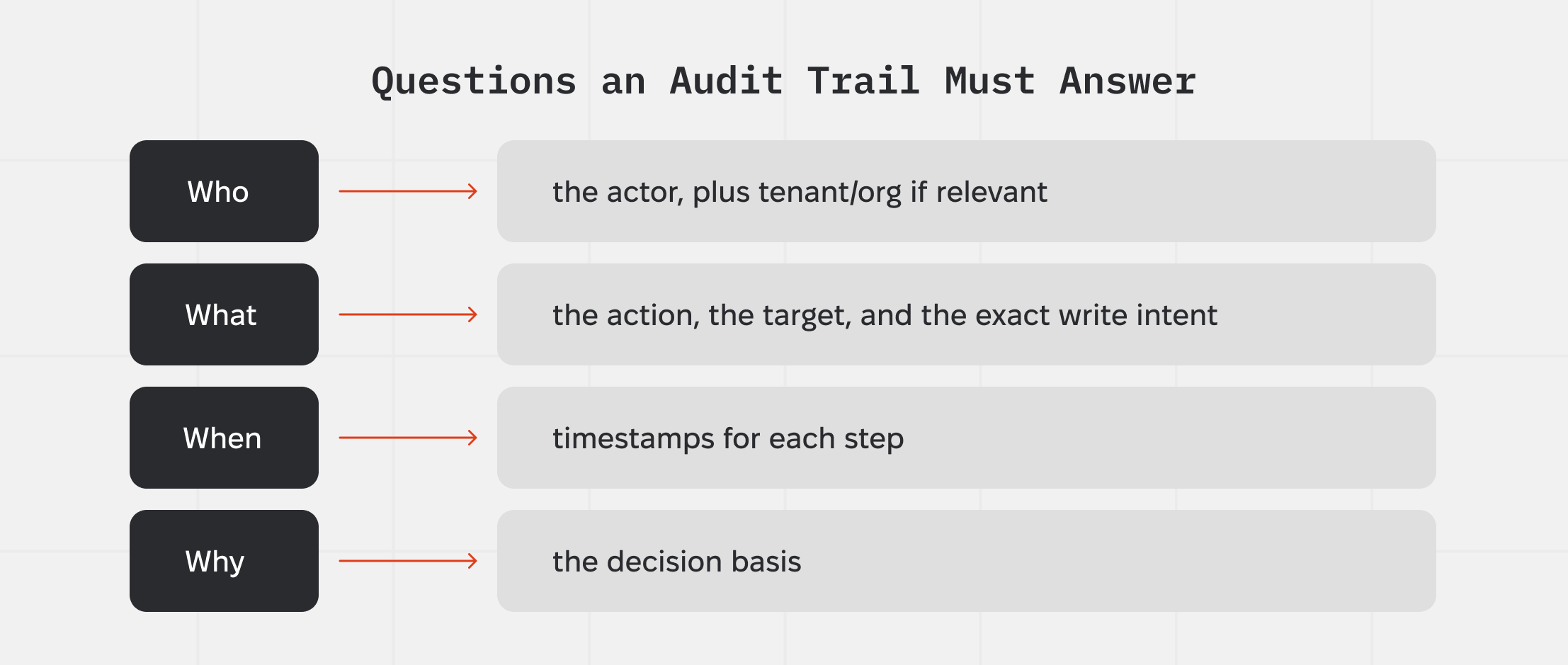

What must be recorded: who / what / when / why

For agent runs, the minimum useful trail usually includes:

- Who: the actor (human approver, service identity, delegated account), plus tenant/org if relevant

- What: the action (tool call + operation), the target (system + record IDs), and the exact write intent (payload/diff or a hashed snapshot)

- When: timestamps for each step (start, attempts, approval, write, verification)

- Why: the decision basis (inputs used, policy/validation outcomes, and the routing decision that led to the write)

An audit trail must answer what changed, by whom, and on what basis; without that, logs only support debugging.

Traceability and tamper resistance

Traceability is mostly discipline: every run gets a run_id, every step/tool call gets a step_id, and we carry correlation IDs into downstream systems so we can join the dots later.

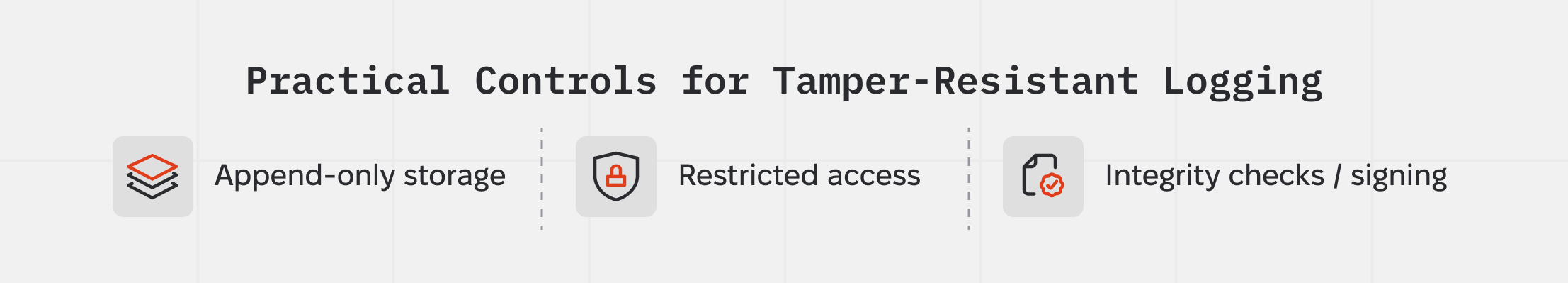

Tamper resistance is about treating logs as evidence. OWASP calls out log integrity as a common failure mode – logs that can be altered or deleted don’t help in investigations. Practical controls look like:

- Append-only storage (write once, don’t edit) and centralized logging (not “only on the box”)

- Restricted access (least privilege to read; even fewer people can delete)

- Integrity checks / signing where possible. CloudTrail’s log file integrity validation is a clear example: hashes + digital signatures to detect modification or deletion after delivery.

Audit-ready runs for compliance and post-incident review

Audit-ready means a run can be reconstructed without guesswork. Inputs, decisions, tool calls, approvals, and final writes are all captured and linked through IDs and timestamps. NIST notes that audit trails are used to reconstruct events after incidents and to assess impact and root cause.

In practice, this means being able to retrieve a single run and reconstruct the full chain: the trigger, attempted actions, approvals, resulting changes, and post-execution verification.

Conclusion

Operability depends on two conditions: a complete execution record and a clean recovery path.

The control layer exists to enforce both, making failures traceable and actionable rather than ad hoc.

.png)

.png)