“I used two different development firms before finally finding MEV.

We have a video advertising technology platform with over 140 repositories on GitHub, so it was (and is) a lot of work, but the MEV team has been amazing.

They, unlike the other firms, document everything with a clear plan and weekly calls, and they do what they say they will do: on time and on budget. They also came to me with solutions and creative ideas. Their team is pragmatic, hard working, diligent, and extremely competent.

I cannot say enough good things about them.”


C.J. Bowden

“Like an extension of our team, we booked daily calls throughout the duration of our initial contract to build a B2B Platform facing senior leaders in Media; I say initial as we fully intend to continue our engagement. Thoughtful, experienced, consultative and incredibly efficient, I would not hesitate to recommend MEV (we worked with Olesia, Bogdan and Max with Alex guiding the business side always with professionalism and candor). If you're building something, talk to MEV and then hire them.”


Jason Greene

“MEV has a deep desire to understand what a platform does, what functionality it delivers, and how it creates value for customers. That allows MEV to develop technology solutions that not only solve complex challenges, but also build a platform that is scalable and extensible. As we’ve grown to 400 hotels, our system’s performance is keeping pace, and our integrations meet the demands of our customers.”


Mike Medsker

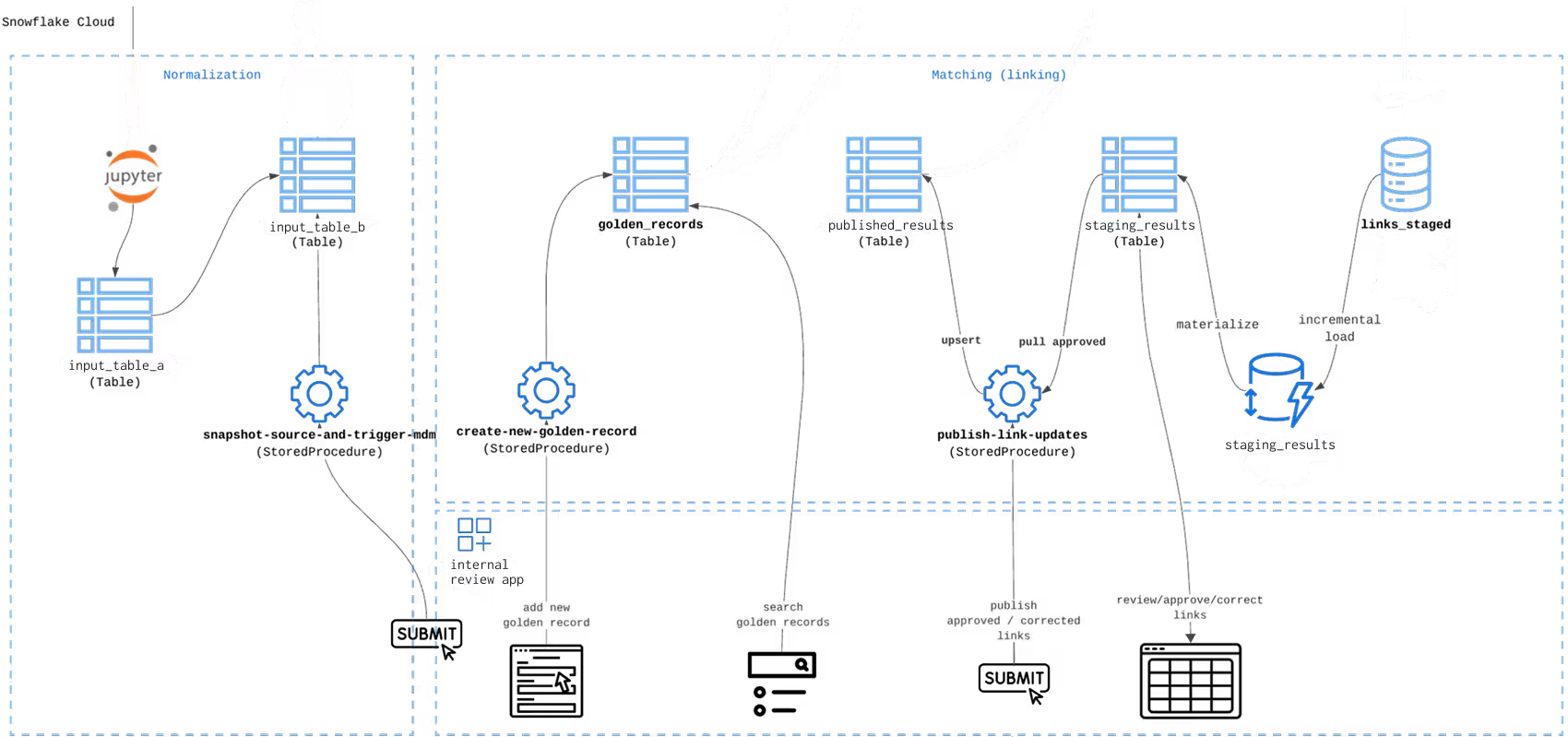

Pipelines clean, standardize, and enrich incoming datasets inside Snowflake. The curated outputs are then delivered to systems that serve the application layer: APIs, operational databases, analytics tools, or product services.

In many architectures, Snowflake prepares the data while another system (such as Postgres or a service layer) handles low-latency reads for user-facing workflows.
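A minimal sketch of this prepare-then-serve split, assuming two in-memory SQLite databases as stand-ins: one plays the warehouse role (Snowflake in the text) and the other the low-latency serving store (Postgres in the text). The table names, the `build_curated`, `publish`, and `lookup_total` helpers, and the sample rows are hypothetical; a real setup would use the Snowflake and Postgres drivers instead.

```python
from __future__ import annotations

import sqlite3

# Hypothetical raw rows; in the setup described above they would land in Snowflake.
RAW_EVENTS = [
    ("acme", "2024-01-01", 120.0),
    ("acme", "2024-01-02", 80.0),
    ("globex", "2024-01-01", 45.5),
]


def build_curated(warehouse: sqlite3.Connection) -> list[tuple[str, float]]:
    """Batch transformation on the warehouse side: total amount per customer."""
    warehouse.execute("CREATE TABLE raw_events (customer TEXT, day TEXT, amount REAL)")
    warehouse.executemany("INSERT INTO raw_events VALUES (?, ?, ?)", RAW_EVENTS)
    return warehouse.execute(
        "SELECT customer, SUM(amount) FROM raw_events GROUP BY customer ORDER BY customer"
    ).fetchall()


def publish(serving: sqlite3.Connection, rows: list[tuple[str, float]]) -> None:
    """Deliver the curated rows to the serving store, keyed for point lookups."""
    serving.execute("CREATE TABLE customer_totals (customer TEXT PRIMARY KEY, total REAL)")
    serving.executemany("INSERT INTO customer_totals VALUES (?, ?)", rows)
    serving.commit()


def lookup_total(serving: sqlite3.Connection, customer: str) -> float | None:
    """Low-latency read path used by the application; no warehouse query involved."""
    row = serving.execute(
        "SELECT total FROM customer_totals WHERE customer = ?", (customer,)
    ).fetchone()
    return row[0] if row else None


warehouse = sqlite3.connect(":memory:")  # stand-in for Snowflake
serving = sqlite3.connect(":memory:")    # stand-in for Postgres
publish(serving, build_curated(warehouse))
print(lookup_total(serving, "acme"))  # 200.0
```

The heavy aggregation runs once, in batch, on the warehouse side; the application only performs indexed point reads against the small curated table.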

Snowflake alone is usually not enough: it is typically one part of the architecture. Most production pipelines also involve ingestion tools, orchestration jobs, storage layers, and downstream serving systems.

A typical implementation may include Snowflake for transformations, object storage for intermediate datasets, orchestration tools for scheduling jobs, and operational databases or APIs for application access.
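The orchestration piece can be sketched with Python's standard-library `graphlib.TopologicalSorter`, which releases each job only after all of its dependencies are done. The job names below are hypothetical placeholders for the components listed above, not any specific orchestration tool; a production scheduler would also handle retries, timing, and persisted state.

```python
from __future__ import annotations

from graphlib import TopologicalSorter

# Hypothetical job graph: each job maps to the set of jobs it depends on.
JOBS: dict[str, set[str]] = {
    "ingest_orders": set(),
    "ingest_customers": set(),
    "stage_to_object_storage": {"ingest_orders", "ingest_customers"},
    "transform_in_snowflake": {"stage_to_object_storage"},
    "validate_outputs": {"transform_in_snowflake"},
    "publish_to_serving_db": {"validate_outputs"},
}


def run_in_batches(jobs: dict[str, set[str]]) -> list[list[str]]:
    """Group jobs into batches; every job in a batch could run in parallel.

    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    sorter = TopologicalSorter(jobs)
    sorter.prepare()
    batches: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # jobs whose dependencies are all done
        batches.append(ready)
        sorter.done(*ready)  # a real scheduler would call this as each job finishes
    return batches


for step, batch in enumerate(run_in_batches(JOBS), start=1):
    print(step, batch)
```

Both ingestion jobs land in the first batch because neither depends on anything; every later stage waits for the one before it.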

We design the full flow rather than limiting work to the warehouse.

Starting from existing transformation logic is a common situation.

Many teams have transformation logic scattered across SQL scripts, notebooks, or manual processes. The task is usually not inventing new logic but turning existing rules into a structured pipeline.

We break large scripts into clear transformation stages, convert them into maintainable jobs, and add validation steps so the output stays consistent with the original logic.
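A minimal sketch of that refactoring step, assuming a hypothetical legacy function and sample rows: the same rules are split into small named stages, and a parity check compares the staged output with the legacy output so the old script can be retired only once both agree.

```python
from __future__ import annotations

from typing import Callable

Row = dict  # rows are plain dicts in this sketch


def legacy_script(rows: list[Row]) -> list[Row]:
    """Hypothetical original logic: filtering, cleanup, and conversion in one loop."""
    out = []
    for r in rows:
        if r["status"] == "cancelled":
            continue
        email = str(r["email"]).strip().lower()
        out.append({"email": email, "amount_usd": round(r["amount_cents"] / 100, 2)})
    return out


# The same rules split into small stages that can be tested and scheduled separately.
def drop_cancelled(rows: list[Row]) -> list[Row]:
    return [r for r in rows if r["status"] != "cancelled"]


def normalize_email(rows: list[Row]) -> list[Row]:
    return [{**r, "email": str(r["email"]).strip().lower()} for r in rows]


def to_dollars(rows: list[Row]) -> list[Row]:
    return [{"email": r["email"], "amount_usd": round(r["amount_cents"] / 100, 2)} for r in rows]


STAGES: list[Callable[[list[Row]], list[Row]]] = [drop_cancelled, normalize_email, to_dollars]


def run_stages(rows: list[Row]) -> list[Row]:
    for stage in STAGES:
        rows = stage(rows)
    return rows


def check_parity(rows: list[Row]) -> list[Row]:
    """Validation step: the staged pipeline must reproduce the legacy output exactly."""
    expected, actual = legacy_script(rows), run_stages(rows)
    if expected != actual:
        raise ValueError(f"parity check failed: {expected!r} != {actual!r}")
    return actual


SAMPLE = [
    {"email": " Ana@Example.com ", "amount_cents": 1999, "status": "paid"},
    {"email": "bo@example.com", "amount_cents": 500, "status": "cancelled"},
]
print(check_parity(SAMPLE))  # [{'email': 'ana@example.com', 'amount_usd': 19.99}]
```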

This approach preserves domain knowledge while making the pipeline easier to maintain and extend.

An unstable pipeline can often be fixed without starting over.

Many pipelines fail because logic, transformations, and delivery layers are tightly coupled. Instead of rewriting the entire system, we usually isolate the unstable parts and restructure them.

Typical improvements include separating ingestion from transformation, introducing validation checks, standardizing schemas, and rebuilding the serving layer while keeping the underlying data logic intact.
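One way to picture schema standardization plus a validation check, as a sketch: a hypothetical canonical schema maps each target column to the source column names it accepts and a cast function. Rows that are missing a column or fail a cast are set aside with a reason instead of breaking the whole run. The column names, aliases, and casts are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical canonical schema: target column -> (accepted source names, cast function).
CANONICAL = {
    "order_id": ({"order_id", "OrderID", "id"}, int),
    "amount": ({"amount", "Amount", "total"}, float),
    "country": ({"country", "Country", "country_code"}, lambda v: str(v).strip().upper()),
}


@dataclass
class StandardizeResult:
    rows: list[dict] = field(default_factory=list)
    rejected: list[tuple[dict, str]] = field(default_factory=list)  # (raw row, reason)


def standardize(raw_rows: list[dict]) -> StandardizeResult:
    """Rename and cast each source row to the canonical schema, or reject it."""
    result = StandardizeResult()
    for raw in raw_rows:
        clean: dict = {}
        error = None
        for target, (aliases, cast) in CANONICAL.items():
            source_key = next((k for k in raw if k in aliases), None)
            if source_key is None:
                error = f"missing column {target}"
                break
            try:
                clean[target] = cast(raw[source_key])
            except (TypeError, ValueError):
                error = f"bad value for {target}: {raw[source_key]!r}"
                break
        if error:
            result.rejected.append((raw, error))
        else:
            result.rows.append(clean)
    return result


res = standardize([
    {"OrderID": "17", "total": "9.5", "country_code": " us "},
    {"id": 18, "amount": "n/a", "country": "de"},
    {"order_id": 19, "amount": 3},
])
print(res.rows)
print([reason for _, reason in res.rejected])
```

Keeping this mapping separate from ingestion means a new source with different column names only needs new aliases, not changes to downstream transformations.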

This allows teams to stabilize the pipeline without disrupting working components.

We also provide ongoing support. Once the pipeline is running in production, teams usually need help with monitoring, schema changes, source updates, and performance improvements.

Support can include pipeline monitoring, troubleshooting failed runs, adapting to new data sources, and evolving the data model as the product grows.
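For the monitoring side, a sketch of schema-drift detection, assuming the column-to-type map for each source is already available (in Snowflake it could be read from the `INFORMATION_SCHEMA.COLUMNS` view). The expected schema, the sample types, and the rule that removed or retyped columns count as breaking while new columns are informational are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DriftReport:
    added: frozenset[str]
    removed: frozenset[str]
    retyped: dict[str, tuple[str, str]]  # column -> (expected type, observed type)

    @property
    def breaking(self) -> bool:
        # Assumption: removed or retyped columns break downstream jobs; new ones do not.
        return bool(self.removed or self.retyped)


def detect_drift(expected: dict[str, str], observed: dict[str, str]) -> DriftReport:
    """Compare the expected source schema with what the source currently exposes."""
    shared = expected.keys() & observed.keys()
    return DriftReport(
        added=frozenset(observed.keys() - expected.keys()),
        removed=frozenset(expected.keys() - observed.keys()),
        retyped={c: (expected[c], observed[c]) for c in sorted(shared) if expected[c] != observed[c]},
    )


EXPECTED = {"order_id": "NUMBER", "amount": "FLOAT", "country": "VARCHAR"}
observed = {"order_id": "NUMBER", "amount": "VARCHAR", "channel": "VARCHAR"}
report = detect_drift(EXPECTED, observed)
print(report)
print("breaking change:", report.breaking)
```

A check like this can run before each scheduled load so that a source update surfaces as a clear alert rather than as a failed or silently wrong transformation.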

The goal is to keep the pipeline stable while allowing it to evolve with the system it supports.

A Snowflake data pipeline is a workflow that moves raw data into Snowflake, transforms it into a consistent structure, and delivers curated outputs for analytics, APIs, or operational systems. Pipelines typically include ingestion, transformation logic, validation checks, and delivery to downstream services.
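The four stages in that definition can be sketched as plain functions, with validation acting as a gate: if any curated row fails a check, nothing is delivered. The CSV-like input, the `sku` and `qty` fields, and the `deliver` callback are hypothetical; in practice ingestion and transformation would run in Snowflake, and delivery would write to an API or operational database.

```python
from __future__ import annotations

from typing import Callable, Iterable


class ValidationError(Exception):
    """Raised when curated output fails a check; delivery is skipped."""


def ingest(source: Iterable[str]) -> list[list[str]]:
    # Hypothetical raw input: CSV-like lines "sku,qty"; blank lines are ignored.
    return [line.split(",") for line in source if line.strip()]


def transform(raw: list[list[str]]) -> list[dict]:
    # Normalize SKUs and convert quantities to integers.
    return [{"sku": sku.strip().upper(), "qty": int(qty)} for sku, qty in raw]


def validate(rows: list[dict]) -> list[dict]:
    problems = [r for r in rows if r["qty"] < 0 or not r["sku"]]
    if problems:
        raise ValidationError(f"{len(problems)} invalid row(s): {problems!r}")
    return rows


def run_pipeline(source: Iterable[str], deliver: Callable[[list[dict]], None]) -> int:
    """ingest -> transform -> validate -> deliver; returns the number of rows delivered."""
    rows = validate(transform(ingest(source)))
    deliver(rows)  # only reached when validation passes
    return len(rows)


sink: list[dict] = []
print(run_pipeline(["a-1, 3", "b-2,5", ""], sink.extend), sink)
try:
    run_pipeline(["c-3,-1"], sink.extend)
except ValidationError as exc:
    print("blocked:", exc)
```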
